GDPR compliance in AI-powered CRM tools is mandatory for businesses that handle the personal data of people in the EU. Non-compliance can lead to fines of up to €20 million or 4% of total worldwide annual turnover, whichever is higher. Here’s what you need to know:

- GDPR Basics: Applies to any organization that offers goods or services to, or monitors the behavior of, people in the EU, regardless of where the organization is based.

- AI Challenges: AI-driven CRMs process large datasets, raising risks like data breaches, biased decisions, and security vulnerabilities.

- Key GDPR Principles: Systems must ensure data minimization, transparency, lawful processing, and storage limits.

- Compliance Steps: Conduct data audits, manage consent effectively, and enable data subject rights like access, rectification, and erasure.

- Best Practices: Regularly review AI models, update Data Protection Impact Assessments (DPIAs), and train staff on GDPR requirements.

Quick Tips

- Use tools with built-in privacy features like Salesforce’s "Einstein Trust Layer" or HubSpot’s "GDPR Delete."

- Implement human oversight for AI decisions.

- Ensure privacy by design and document compliance efforts.

GDPR compliance isn’t just about avoiding fines – it’s about protecting customer trust and data integrity.


Core GDPR Principles for AI-Powered CRM Systems

The General Data Protection Regulation (GDPR) establishes key principles for handling personal data, and these apply directly to AI-powered CRM systems. Together they form a framework for addressing the challenges of using machine learning in customer relationship management. Serious infringements can lead to penalties of up to €20 million (£17.5 million under the UK GDPR) or 4% of total worldwide annual turnover, whichever is higher. Here’s a closer look at how these principles guide the design of AI-driven CRMs.

Data Protection by Design and by Default

Data protection must be built into every stage of your CRM system’s design and operation. Article 25 of the GDPR mandates this proactive approach. According to the Information Commissioner’s Office (ICO):

"Data protection by design is about considering data protection and privacy issues upfront in everything you do".

For AI-powered CRMs, this could involve techniques like pseudonymization, where identifiable information is replaced with artificial identifiers, reducing the risk of exposing sensitive data. However, the complexity of machine learning frameworks can increase vulnerabilities, making this principle challenging to implement effectively.
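As a minimal sketch of pseudonymization, the Python below replaces direct identifiers with keyed HMAC-SHA256 values. It assumes CRM records arrive as dictionaries and that the key is held in a separate key store; the field names, the placeholder key, and the helper names are hypothetical. A keyed hash is used instead of a plain hash because plain hashes of email addresses can be reversed by hashing likely candidates. Pseudonymized data is still personal data under GDPR, because whoever holds the key can re-link it.

```python
import hashlib
import hmac

# Hypothetical placeholder: a real key would come from a secrets manager,
# stored separately from the pseudonymized dataset.
SECRET_KEY = b"replace-with-a-key-from-your-key-store"

# Hypothetical direct identifiers in a CRM contact record.
DIRECT_IDENTIFIERS = ("name", "email", "phone")


def pseudonym(value: str, key: bytes = SECRET_KEY) -> str:
    """Return a stable artificial identifier for one identifying value."""
    normalized = value.strip().lower()
    return hmac.new(key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


def pseudonymize_record(record: dict, key: bytes = SECRET_KEY) -> dict:
    """Replace direct identifiers with keyed pseudonyms; keep other fields as-is."""
    out = {}
    for field_name, value in record.items():
        if field_name in DIRECT_IDENTIFIERS and value is not None:
            out[field_name] = pseudonym(str(value), key)
        else:
            out[field_name] = value
    return out


contact = {"name": "Ana Silva", "email": "Ana@Example.com", "region": "PT", "orders": 4}
print(pseudonymize_record(contact))
```

Because the same input always maps to the same pseudonym, records can still be joined for model training without exposing the raw identifier.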

Default settings should limit data collection, storage duration, and access without requiring users to adjust privacy settings manually. If you’re balancing privacy with prediction accuracy, document these trade-offs to demonstrate compliance and accountability.

Lawfulness, Transparency, and Fair Processing

Every stage of your CRM’s AI operations needs a lawful basis under Article 6. For example, you might rely on "legitimate interests" to train a lead-scoring algorithm but still need consent to send electronic marketing messages based on those scores.
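One way to make this requirement checkable is a register that maps each AI processing purpose to its recorded lawful basis and refuses processing for purposes that have none. The sketch below is a hypothetical illustration: the purpose names are invented, and choosing the basis for each purpose is a legal decision that the code only records, not makes.

```python
from enum import Enum


class LawfulBasis(Enum):
    # The six lawful bases listed in Article 6(1) GDPR.
    CONSENT = "consent"
    CONTRACT = "contract"
    LEGAL_OBLIGATION = "legal_obligation"
    VITAL_INTERESTS = "vital_interests"
    PUBLIC_TASK = "public_task"
    LEGITIMATE_INTERESTS = "legitimate_interests"


# Hypothetical register: one entry per processing purpose.
PURPOSE_REGISTER = {
    "train_lead_scoring_model": LawfulBasis.LEGITIMATE_INTERESTS,
    "send_score_based_marketing": LawfulBasis.CONSENT,
}


def check_purpose(purpose: str, has_consent: bool = False) -> LawfulBasis:
    """Raise if a purpose has no recorded basis, or relies on missing consent."""
    basis = PURPOSE_REGISTER.get(purpose)
    if basis is None:
        raise PermissionError(f"No lawful basis recorded for purpose {purpose!r}")
    if basis is LawfulBasis.CONSENT and not has_consent:
        raise PermissionError(f"Purpose {purpose!r} requires consent from this contact")
    return basis
```

Calling `check_purpose` at the start of each pipeline step turns a missing basis into a hard failure instead of a silent gap.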

Transparency is equally important. Your privacy notices must clearly explain how the AI processes customer data and the logic behind automated decisions. However, striking the right balance is crucial – you need to provide enough detail for customers to understand, without exposing proprietary algorithms or creating security risks.

The fairness principle requires that AI models avoid biased outcomes and maintain statistical accuracy. Inaccurate or biased predictions can lead to unfair treatment of individuals, which breaches that principle. Monitor your models regularly for accuracy and bias, especially when they influence decisions that significantly affect individuals.
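As a sketch of routine bias monitoring, the code below compares the rate of favourable outcomes (for example, leads scored as high priority) across groups and flags large gaps. The 0.8 threshold is the "four-fifths" heuristic from US employment practice, not a GDPR rule, and the group labels and sample are hypothetical; the right metric and threshold depend on the decision being made.

```python
from collections import defaultdict


def selection_rates(outcomes):
    """outcomes: iterable of (group, favourable) pairs -> favourable rate per group."""
    totals, favourable = defaultdict(int), defaultdict(int)
    for group, is_favourable in outcomes:
        totals[group] += 1
        favourable[group] += int(is_favourable)
    return {group: favourable[group] / totals[group] for group in totals}


def disparity_report(outcomes, threshold=0.8):
    """Ratio of each group's rate to the highest rate, and whether it is below threshold."""
    rates = selection_rates(outcomes)
    best = max(rates.values())
    report = {}
    for group, rate in rates.items():
        ratio = rate / best if best else 1.0  # all-zero rates: no disparity
        report[group] = (ratio, ratio < threshold)
    return report


# Hypothetical monitoring sample: (age band, scored as high priority?)
sample = ([("18-34", True)] * 60 + [("18-34", False)] * 40
          + [("55+", True)] * 40 + [("55+", False)] * 60)
for group, (ratio, flagged) in disparity_report(sample).items():
    print(group, round(ratio, 2), "REVIEW" if flagged else "ok")
```

A flagged group is a prompt for investigation, not proof of unlawful bias; the gap may reflect genuine differences that still need to be justified and documented.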

Data Minimization and Storage Limits

AI systems thrive on large datasets, but GDPR Article 5(1)(c) emphasizes that:

"Personal data shall be adequate, relevant and limited to what is necessary in relation to the purposes for which they are processed".

For AI-driven CRMs, this means identifying only the essential features needed for reliable predictions. Strategies like using synthetic data, applying data masking, or adopting privacy-enhancing technologies (PETs) such as differential privacy can help you train models effectively while minimizing exposure to real customer data.
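To illustrate one of these privacy-enhancing technologies, the sketch below applies the Laplace mechanism from differential privacy to a count query, such as how many contacts in a segment converted. The segment data and the epsilon value are assumptions. Production systems would normally use a vetted differential privacy library, because hand-rolled floating-point noise has known weaknesses.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two independent exponential draws with mean `scale`
    # follows a Laplace distribution centred on 0 with that scale.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def dp_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """Noisy count. Adding or removing one person changes a count by at most 1
    (sensitivity 1), so Laplace noise with scale 1/epsilon gives epsilon-DP."""
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Hypothetical segment: 300 contacts, 100 of whom converted.
contacts = [{"converted": i % 3 == 0} for i in range(300)]
rng = random.Random(42)
print(round(dp_count(contacts, lambda c: c["converted"], epsilon=1.0, rng=rng), 1))
```

Each answered query spends privacy budget: asking the same question repeatedly with fresh noise lets an analyst average the noise away, so real deployments track cumulative epsilon.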

Storage limitations are just as critical. Personal data, including training datasets and temporary files, must be deleted when they are no longer needed. This might involve securely removing data that no longer contributes to your model’s purpose or predictive accuracy. Automated deletion processes and detailed data retention schedules can help ensure compliance.
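A retention schedule can be enforced by a scheduled job. The sketch assumes each stored item carries a data category and a creation timestamp; the categories and periods are hypothetical placeholders, since actual periods depend on your documented purposes and legal obligations. Items with no recorded category are kept but reported, so gaps in the schedule surface instead of being silently ignored.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical retention schedule: category -> maximum age.
RETENTION = {
    "training_snapshot": timedelta(days=90),
    "scoring_log": timedelta(days=30),
    "contact_record": timedelta(days=3 * 365),
}


@dataclass
class StoredItem:
    item_id: str
    category: str
    created_at: datetime


def split_expired(items, now=None):
    """Return (keep, delete, unknown) lists according to RETENTION."""
    now = now or datetime.now(timezone.utc)
    keep, delete, unknown = [], [], []
    for item in items:
        limit = RETENTION.get(item.category)
        if limit is None:
            unknown.append(item)  # no rule: keep, but flag for review
            keep.append(item)
        elif now - item.created_at > limit:
            delete.append(item)
        else:
            keep.append(item)
    return keep, delete, unknown


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
items = [
    StoredItem("t1", "training_snapshot", now - timedelta(days=120)),
    StoredItem("s1", "scoring_log", now - timedelta(days=10)),
    StoredItem("x1", "uncategorized_export", now - timedelta(days=400)),
]
keep, delete, unknown = split_expired(items, now)
print([i.item_id for i in delete])  # ['t1']
```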

| GDPR Principle | CRM Application |
|---|---|
| Lawfulness | Must have a valid basis for training models and making automated predictions |
| Transparency | Privacy notices should clearly explain how data is used and decisions are made |
| Data Minimization | Use only the minimum features required for reliable predictions |
| Storage Limitation | Delete training datasets and intermediate files once they are no longer needed |
| Accountability | Keep an audit trail of all data movements and model training versions |

How to Ensure GDPR Compliance in AI-Driven CRM Tools

To align your AI-powered CRM with GDPR requirements, follow these practical steps grounded in key GDPR principles.

Conduct a Data Audit

Begin by mapping out every stage of your AI data lifecycle. This means identifying all personal data your CRM handles – from the training data used to develop AI models to the inputs it processes and the outputs it generates, like customer profiles or scores. If any of this data can identify an individual, it falls under GDPR regulations.

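Documenting processing activities is mandatory for most organizations. Article 30 of the GDPR (mirrored in the UK GDPR) requires records that detail, among other things, processing purposes, categories of data subjects and personal data, recipients, international transfers, and retention periods. The exemption for organizations with fewer than 250 employees does not apply if the processing is more than occasional, is likely to pose a risk to individuals, or involves special category or criminal offence data.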
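Keeping the Article 30 record as structured data makes gaps easy to spot. The sketch below models a simplified entry; the class and field names are hypothetical, and the fields paraphrase Article 30(1), which remains the authoritative list.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ProcessingRecord:
    """Simplified record of a processing activity, loosely following Article 30(1)."""
    activity: str
    controller_contact: str
    purposes: list = field(default_factory=list)
    data_subject_categories: list = field(default_factory=list)
    personal_data_categories: list = field(default_factory=list)
    recipient_categories: list = field(default_factory=list)
    third_country_transfers: list = field(default_factory=list)
    retention_period: str = ""
    security_measures: str = ""


def missing_fields(record: ProcessingRecord, optional=("third_country_transfers",)):
    """List empty fields, ignoring those that may legitimately be empty."""
    return [f.name for f in fields(record)
            if f.name not in optional and not getattr(record, f.name)]


lead_scoring = ProcessingRecord(
    activity="AI lead scoring",
    controller_contact="dpo@example.com",
    purposes=["prioritise sales follow-up"],
    data_subject_categories=["prospects"],
    personal_data_categories=["contact details", "web activity"],
)
print(missing_fields(lead_scoring))
# ['recipient_categories', 'retention_period', 'security_measures']
```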

Categorize your data types carefully. Separate raw personal data (e.g., names, emails) from the "pre-processed" data used in machine learning and from AI-generated inferences. Transforming or normalizing personal data is itself processing under GDPR, and the result remains personal data if it can still be linked to an individual, so your compliance obligations continue to apply.

Check whether your AI model retains personal data. Some models do so by design (for example, k-nearest-neighbor models and support vector machines keep some training examples), while others can memorize training data and unintentionally reveal it. This matters for individual rights such as erasure, which may require re-training the model.

Leverage automated tools and input from various departments to conduct a thorough data audit. Use standardized checklists to evaluate data relevance at each stage of development before deploying the system. Once you’ve mapped your data, focus on managing consent and automated decision-making processes.
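Automated discovery often starts with pattern matching over free-text fields, where personal data tends to hide (notes, call transcripts, AI-generated summaries). The sketch below flags email addresses and phone-like numbers. The patterns are deliberately simple assumptions: they miss names and addresses and will also produce false positives such as dates, so treat the output as a first pass for manual review.

```python
import re

# Simplified, illustrative patterns; real scanners need much broader coverage.
PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "phone": re.compile(r"\+?\d[\d\s-]{7,}\d"),
}


def scan_records(records):
    """Yield (record_id, field_name, pii_type) for each match in string fields."""
    for record_id, record in records.items():
        for field_name, value in record.items():
            if not isinstance(value, str):
                continue
            for pii_type, pattern in PATTERNS.items():
                if pattern.search(value):
                    yield record_id, field_name, pii_type


# Hypothetical CRM extract keyed by record ID.
crm = {
    "c-1": {"notes": "Call back on +44 20 7946 0958 after the demo", "score": 0.82},
    "c-2": {"notes": "Prefers email: j.doe@example.org", "segment": "SMB"},
}
print(sorted(scan_records(crm)))
# [('c-1', 'notes', 'phone'), ('c-2', 'notes', 'email')]
```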

Manage Consent and Automated Decisions

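Article 22 restricts decisions based solely on automated processing that produce legal or similarly significant effects. Such decisions are permitted only when they are necessary for a contract, authorized by law, or based on the individual’s explicit consent, and safeguards such as the right to obtain human intervention must be in place. This applies to CRM functionalities like credit scoring, fraud detection, or determining eligibility.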

Use active opt-ins instead of pre-checked boxes. For example, require users to tick an empty box or click an "I consent" button. Keep consent requests for AI processing clear, prominent, and separate from general terms and conditions to ensure they are "specific and informed."

"The data subject shall have the right to withdraw his or her consent at any time. The withdrawal of consent shall not affect the lawfulness of processing based on consent before its withdrawal." – Article 7, UK GDPR

Make withdrawing consent as simple as giving it. If consent is collected with a single click, allow withdrawal through the same one-step process. To ensure consent remains valid, the ICO advises refreshing it at least every two years.
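The consent rules above map naturally onto a small record type: consent starts unset until an affirmative action, withdrawal is a single call, and consent older than a refresh interval is treated as needing renewal. The class is a hypothetical sketch, not a CRM API. The two-year interval mirrors the ICO advice above and is a policy setting, since consent does not lapse automatically in law.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

REFRESH_AFTER = timedelta(days=730)  # roughly two years; a policy assumption


@dataclass
class ConsentRecord:
    contact_id: str
    purpose: str        # e.g. "ai_personalised_marketing" (hypothetical)
    wording: str        # exact text the person agreed to
    method: str         # e.g. "web form checkbox"
    given_at: Optional[datetime] = None      # stays None until an opt-in
    withdrawn_at: Optional[datetime] = None

    def give(self, when: datetime) -> None:
        """Record an affirmative opt-in, such as ticking an empty box."""
        self.given_at = when
        self.withdrawn_at = None

    def withdraw(self, when: datetime) -> None:
        """One-step withdrawal, mirroring the one-step opt-in."""
        self.withdrawn_at = when

    def is_valid(self, now: datetime) -> bool:
        """Valid only if given, not withdrawn, and within the refresh interval."""
        if self.given_at is None or self.withdrawn_at is not None:
            return False
        return now - self.given_at <= REFRESH_AFTER


t0 = datetime(2025, 4, 15, 9, 30, tzinfo=timezone.utc)
consent = ConsentRecord("c-1", "ai_personalised_marketing",
                        "Yes, personalise offers using my purchase history",
                        "web form checkbox")
consent.give(t0)
print(consent.is_valid(t0 + timedelta(days=30)))
```

A production system would also keep an append-only history of every give and withdraw event, because overwriting timestamps loses the audit trail regulators may ask for.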

Explain how automated decisions work and their potential consequences. Use layered privacy notices, including "just-in-time" prompts that appear when users input their data. However, avoid overcomplicating explanations – if the system is too complex to explain, it might also be too complex to review or contest effectively.

Introduce a human-in-the-loop process for reviewing automated decisions. The ICO emphasizes:

"Human intervention should involve a review of the decision, which must be carried out by someone with the appropriate authority and capability to change that decision."

Train staff to review and override AI decisions when necessary. With consent management in place, focus on establishing robust systems for handling data subject rights.
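A minimal human-in-the-loop router can be sketched as follows, assuming each model decision is tagged with whether it has a legal or similarly significant effect. Significant decisions wait in a review queue, and only a reviewer sets their final outcome. The override rate then shows how often reviewers disagree with the model. All names and outcome labels are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(eq=False)  # compare by identity so queue removal is exact
class Decision:
    contact_id: str
    model_outcome: str            # e.g. "decline" (hypothetical labels)
    significant_effect: bool      # legal or similarly significant effect?
    final_outcome: Optional[str] = None
    reviewed_by: Optional[str] = None


review_queue: list = []


def route(decision: Decision) -> Decision:
    """Apply low-impact outcomes directly; queue significant ones for a human."""
    if decision.significant_effect:
        review_queue.append(decision)
    else:
        decision.final_outcome = decision.model_outcome
    return decision


def review(decision: Decision, reviewer: str, outcome: str) -> None:
    """A reviewer with authority to change the decision sets the final outcome."""
    decision.final_outcome = outcome
    decision.reviewed_by = reviewer
    review_queue.remove(decision)


def override_rate(decisions) -> float:
    """Share of reviewed decisions where the human changed the model's outcome."""
    reviewed = [d for d in decisions if d.reviewed_by is not None]
    if not reviewed:
        return 0.0
    return sum(d.final_outcome != d.model_outcome for d in reviewed) / len(reviewed)
```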

Handle Data Subject Rights Requests

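Your CRM must be able to process requests for access, rectification, erasure, and portability. Respond without undue delay and within one month of receipt. This can be extended by two further months for complex or numerous requests, provided you inform the requester within the first month. Log every request, verbal or written, including its due date, the actions taken, and the final resolution.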
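A request log needs a reliable due date. The sketch below applies a simplified version of the ICO’s calendar-month method: the deadline is the corresponding date in the following month, or that month’s last day if no such date exists. It ignores weekend and bank-holiday adjustments and the two-month extension, and the `RightsRequest` fields are hypothetical.

```python
import calendar
from dataclasses import dataclass, field
from datetime import date


def one_month_deadline(received: date) -> date:
    """Corresponding calendar date next month, clamped to that month's last day."""
    year = received.year + received.month // 12   # December rolls into next year
    month = received.month % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(received.day, last_day))


@dataclass
class RightsRequest:
    request_id: str
    right: str          # "access", "rectification", "erasure", "portability"
    channel: str        # "verbal", "email", "portal", ...
    received: date
    actions: list = field(default_factory=list)
    resolution: str = ""

    @property
    def due(self) -> date:
        return one_month_deadline(self.received)


req = RightsRequest("R-001", "access", "verbal", date(2025, 1, 31))
req.actions.append("identity verified")
print(req.due)  # 2025-02-28
```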

Use automated tools to locate personal data across multiple systems. This includes customer data as well as system-generated logs like user activity or search queries. For example, Microsoft’s updated guidance for Dynamics 365 and Power Platform (December 2025) outlines tools like the "Person search report" for locating data, "Advanced Find Search" for record identification, and the "Microsoft Data Log Export" tool for retrieving system-generated logs. For erasure, the system supports "hard deletes" of contact records and batch processes to remove interaction logs.

Ensure secure intake portals with identity verification for handling requests. Use jurisdiction-compliant forms and export data in machine-readable formats like JSON, CSV, or XML for access and portability requests.
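Machine-readable exports need only the standard library. The sketch assumes the profile has already been gathered and, for a portability request, filtered to data the person provided: Article 20 portability covers provided data, while the broader right of access also covers inferences such as scores. The function names and profile shape are hypothetical.

```python
import csv
import io
import json


def export_json(profile: dict) -> str:
    """Nested JSON export; `default=str` serialises dates and similar types."""
    return json.dumps(profile, indent=2, sort_keys=True, default=str)


def export_csv(rows: list) -> str:
    """Flat CSV export: one row per record, columns from the union of keys."""
    columns = sorted({key for row in rows for key in row})
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=columns)  # missing keys -> ""
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


profile = {
    "email": "ana@example.com",
    "newsletter": True,
    "orders": [{"id": 1, "total": 42.5}, {"id": 2}],
}
print(export_json(profile))
print(export_csv(profile["orders"]))
```

JSON preserves nesting such as order histories, while CSV suits flat tables that spreadsheet tools and other CRMs import directly.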

When addressing rectification requests, differentiate between factual data (which must be corrected) and AI-generated predictions (e.g., scores or inferences), which represent probabilities rather than inaccuracies. If data is rectified or erased, notify any third-party recipients – like marketing tools or data processors – of the changes. Ensure that erasure requests extend to both live systems and backups.
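Erasure touches several places at once. The sketch below removes a contact from a live store and a training set, flags the model for retraining, and queues notices to recipients, as Article 19 requires unless notification proves impossible or involves disproportionate effort. Backups are often immutable, so one common approach (assumed here) is a suppression list that re-deletes the IDs whenever a backup is restored. The in-memory stores stand in for real systems and are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ErasureJob:
    contact_id: str
    steps: list = field(default_factory=list)


def erase_contact(contact_id, live_store: dict, training_set: list,
                  recipients: list, backup_suppression: set) -> ErasureJob:
    """Apply one erasure request and record each step for the request log."""
    job = ErasureJob(contact_id)
    if live_store.pop(contact_id, None) is not None:
        job.steps.append("deleted live record")
    before = len(training_set)
    training_set[:] = [row for row in training_set
                       if row.get("contact_id") != contact_id]
    if len(training_set) != before:
        job.steps.append("removed from training set; model flagged for retraining")
    # Backups cannot be edited in place: re-delete this ID on any restore.
    backup_suppression.add(contact_id)
    job.steps.append("added to backup suppression list")
    for recipient in recipients:
        job.steps.append(f"erasure notice queued for {recipient}")
    return job
```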

| Right | Process in AI-Driven CRM | Technical Requirement |
|---|---|---|
| Access | Discover data in profiles and system logs | Search/Discovery tools, Secure Portals |
| Rectification | Correct inaccurate facts in CRM records | Manual/Bulk edit, API updates |
| Erasure | Remove data from training sets and live records | Hard delete, Batch jobs, Backup purging |
| Portability | Export provided data in machine-readable form | CSV, XML, or JSON export functionality |
| Automated Decision Rights | Provide logic explanation and human review | Interpretability tools, Human-in-the-loop |

For AI models, when an erasure request covers data used for training, remove it from the training set and, if the model itself retains that data, re-train the model. Logs such as IP addresses and device information may qualify as personal data under GDPR. They can be accessed, exported, or deleted, but generally cannot be rectified because they are factual system records.


GDPR-Compliant AI-Driven CRM Tools

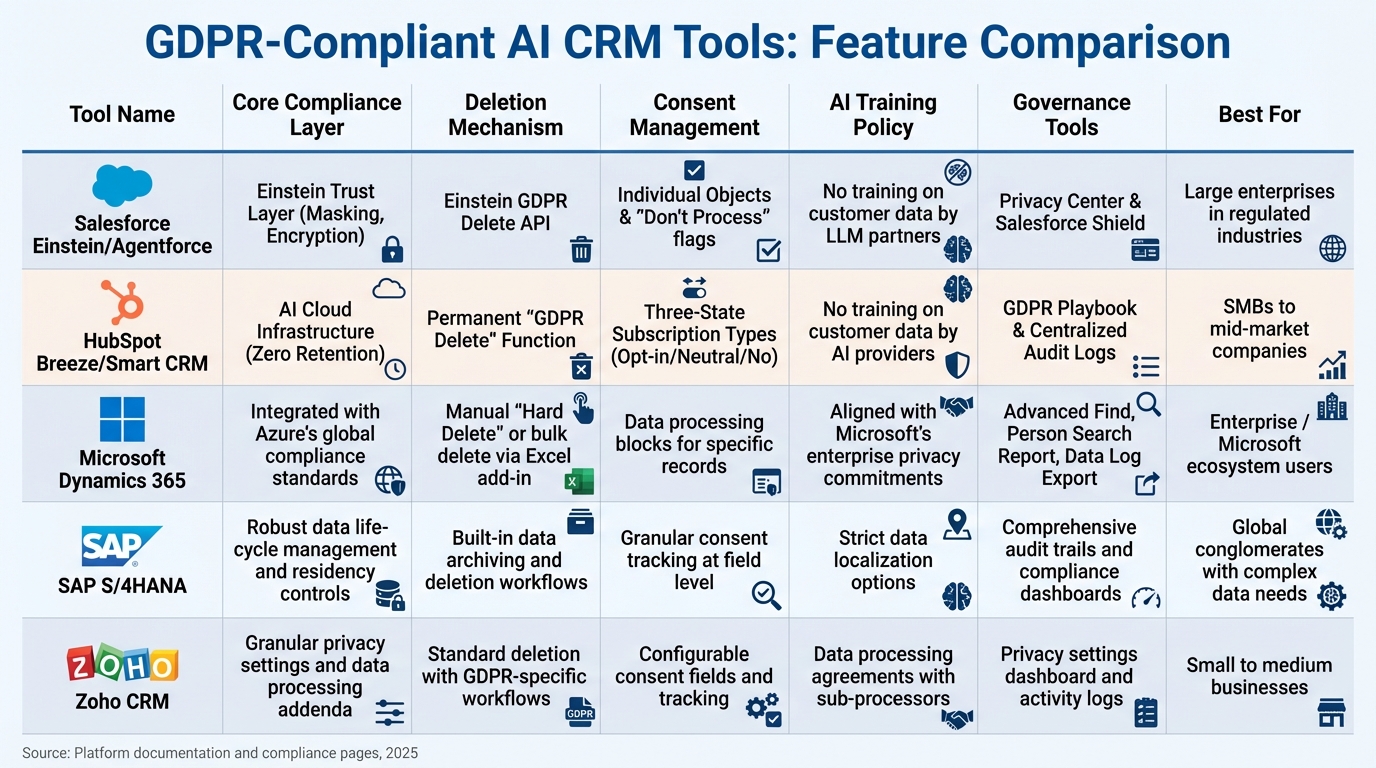

GDPR-Compliant AI CRM Tools Comparison Chart

When it comes to GDPR compliance, choosing the right AI-powered CRM is a critical step. These platforms need to balance automation with strong privacy protections. The market has adapted to GDPR by embedding compliance features directly into CRM systems. As of 2025, 61% of desk workers are either using or planning to use generative AI in their workflows. However, 64% of workers would use generative AI more often if they trusted its safety and security. This highlights the importance of selecting a CRM that prioritizes compliance, especially when data mishandling costs can average $14.8 million.

Leading CRM platforms include features like data masking, encryption, and zero retention policies. For instance, Salesforce introduced its "Einstein Trust Layer", which ensures that prompts and responses are not stored by third-party LLM providers; by the end of 2024, Salesforce had secured over 1,000 "Agentforce" deals, a sign of strong enterprise adoption. Similarly, HubSpot’s Breeze AI infrastructure contractually prohibits providers like OpenAI from using customer data for model training and enforces zero data retention. These examples show how GDPR safeguards are being built into leading platforms.

"The data we manage does not belong to Salesforce – it belongs to the customer." – Salesforce

Both Salesforce and HubSpot go beyond standard CRM functions to offer specialized deletion tools. Salesforce provides the Einstein GDPR Delete API, which permanently removes individual user data from AI storage. HubSpot’s "GDPR Delete" feature permanently erases a contact’s information and stops them from being tracked if they re-engage later. For consent, HubSpot uses a three-state model (opt-in, neutral, opt-out) to capture the affirmative consent GDPR requires, while Salesforce records consent through Individual objects and "Don’t Process" flags.

Comparison of GDPR-Compliant CRM Tools

When evaluating CRM platforms, it’s essential to focus on how they address AI-specific compliance needs rather than just general features. Below is a comparison of five major tools based on their compliance mechanisms, deletion capabilities, consent management, AI training policies, and governance tools.

| Tool | Core Compliance Layer | Deletion Mechanism | Consent Management | AI Training Policy | Governance Tools | Best For |
|---|---|---|---|---|---|---|
| Salesforce Einstein/Agentforce | Einstein Trust Layer (Masking, Encryption) | Einstein GDPR Delete API | Individual Objects & "Don’t Process" flags | No training on customer data by LLM partners | Privacy Center & Salesforce Shield | Large enterprises in regulated industries |
| HubSpot Breeze/Smart CRM | AI Cloud Infrastructure (Zero Retention) | Permanent "GDPR Delete" Function | Three-State Subscription Types (Opt-in/Neutral/No) | No training on customer data by AI providers | GDPR Playbook & Centralized Audit Logs | SMBs to mid-market companies |
| Microsoft Dynamics 365 | Integrated with Azure’s global compliance standards | Manual "Hard Delete" or bulk delete via Excel add-in | Data processing blocks for specific records | Aligned with Microsoft’s enterprise privacy commitments | Advanced Find, Person Search Report, Data Log Export | Enterprise / Microsoft ecosystem users |
| SAP S/4HANA | Robust data lifecycle management and residency controls | Built-in data archiving and deletion workflows | Granular consent tracking at field level | Strict data localization options | Comprehensive audit trails and compliance dashboards | Global conglomerates with complex data needs |
| Zoho CRM | Granular privacy settings and data processing addenda | Standard deletion with GDPR-specific workflows | Configurable consent fields and tracking | Data processing agreements with sub-processors | Privacy settings dashboard and activity logs | Small to medium businesses |

Pricing also plays a role in determining which CRM is the right fit. HubSpot offers a range of pricing options, starting at $0/month for basic contact management, $15–$20/month per seat for the Starter tier, $50/month per seat for Professional (which includes features like duplicate record merging), and $75/month per seat for Enterprise, which includes AI insights and SSO. On the other hand, Salesforce handles AI-specific features like Agentforce through custom paid deals, rather than transparent pricing tiers.

When selecting a CRM, weigh compliance features alongside pricing models. Also review each vendor’s sub-processor list to confirm where data is processed, and check that cross-border transfers are covered by Standard Contractual Clauses (SCCs) or, for U.S. recipients, certification under the EU-U.S. Data Privacy Framework. These checks help keep your organization GDPR-compliant while meeting its specific operational needs.

Best Practices for Maintaining GDPR Compliance

Staying GDPR-compliant isn’t a one-time task – it’s an ongoing effort that needs to adapt as your AI-driven CRM evolves. The Information Commissioner’s Office (ICO) emphasizes that senior management and Data Protection Officers (DPOs) hold ultimate accountability for AI governance. These responsibilities shouldn’t rest solely with technical teams. As your business grows and your CRM handles more customer data, having structured systems in place helps identify compliance gaps early, preventing costly violations. This proactive approach ensures thorough oversight of your systems.

Monitor and Govern AI Systems

Your Data Protection Impact Assessment (DPIA) should be treated as a living document, updated regularly. This is especially important to account for concept drift – when an AI system’s performance changes over time due to shifts in customer demographics or behavior. For instance, if your CRM was trained on data from 2022 but your customer base has significantly changed by 2026, the model’s predictions might become less accurate or even biased. Regular DPIA reviews can help you spot these shifts early.
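Shifts in the input data can be tracked with a simple statistic. The sketch below uses the Population Stability Index (PSI), a common industry heuristic that neither GDPR nor the ICO prescribes, to compare a feature’s distribution at training time with its current distribution. PSI detects input drift, which is one symptom of concept drift rather than a direct measure of it. The age-band data and the 0.2 rule-of-thumb threshold are assumptions.

```python
import math


def proportions(values, edges):
    """Bin numeric values by ascending edges and return the share in each bin."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[sum(v >= edge for edge in edges)] += 1
    return [c / len(values) for c in counts]


def population_stability_index(expected, actual, eps=1e-6):
    """PSI = sum((actual - expected) * ln(actual / expected)) over bins.
    `eps` avoids division by zero for empty bins."""
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


# Hypothetical: share of customers per age band at training time vs. now.
at_training = [0.25, 0.25, 0.25, 0.25]
this_quarter = [0.10, 0.20, 0.30, 0.40]
psi = population_stability_index(at_training, this_quarter)
if psi > 0.2:  # rule-of-thumb threshold, to be tuned
    print(f"PSI {psi:.3f}: significant drift, schedule model and DPIA review")
```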

The ICO also recommends that human intervention be a key part of AI decision-making. Reviewers must have the authority and ability to override AI decisions. Monitoring how often these overrides occur can reveal if your model needs retraining or if there are gaps in your training data. Additionally, be vigilant against privacy attacks. Implement safeguards like rate-limiting on APIs to minimize the risk of "black box" attacks, where malicious users exploit your system by sending numerous queries.
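Rate limiting per client key is one concrete safeguard against query-heavy attacks such as model inversion or membership inference. The token bucket below is a standard algorithm. The per-key scheme, capacity, and refill rate are arbitrary assumptions to tune against real traffic, and the injectable clock exists only to make the behaviour testable.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity` requests, refilled at `rate` tokens per second."""

    def __init__(self, capacity: int, rate: float, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


buckets = {}  # one bucket per API key (hypothetical keying scheme)


def allow_scoring_request(api_key: str) -> bool:
    """Gate calls to a model-scoring endpoint per client key."""
    bucket = buckets.setdefault(api_key, TokenBucket(capacity=20, rate=0.5))
    return bucket.allow()
```

Rejected requests are worth logging too, since a key that repeatedly hits the limit is itself a signal for security review.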

Another critical step is mapping your entire supply chain. Document every AI system update, security patch, and software reconfiguration, and reassess privacy risks before rolling out new releases. A dedicated steering group can help ensure compliance across departments, while automated audit trails provide continuous logs of access, modifications, and processing activities.
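An automated audit trail is more trustworthy if tampering is detectable. The sketch below chains entries by including each previous entry’s SHA-256 hash, so editing any past entry breaks verification. This only detects changes if the latest hash is also anchored somewhere the log’s writer cannot alter; the class and field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only log; each entry stores the hash of the one before it."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    def record(self, actor: str, action: str, target: str, when=None) -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry = {
            "timestamp": (when or datetime.now(timezone.utc)).isoformat(),
            "actor": actor,
            "action": action,
            "target": target,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        """Recompute every hash and link; False means the log was altered."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True


log = AuditTrail()
log.record("svc-trainer", "train_model", "lead_scoring v3")
log.record("analyst-7", "export", "contact c-1")
print(log.verify())
```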

While system oversight is vital, equally important is ensuring your team is well-trained on GDPR requirements.

Train Employees on GDPR Requirements

Effective compliance requires more than just system checks – it demands a well-informed team. Tailor GDPR and AI ethics training programs to two key groups: compliance-focused roles (like DPOs, risk managers, and senior management) and technical specialists (such as machine learning developers, data scientists, and IT risk managers). Employees should be trained to spot "outliers", or individuals whose circumstances differ significantly from the training data, as these can lead to AI errors. They also need to understand how to retrieve personal data embedded within a model itself, such as the training examples a Support Vector Machine retains as support vectors.

Empower your staff to challenge or override AI decisions when necessary. Training should include how to navigate review interfaces and interpret the data the AI used to reach its conclusions. Use your DPIAs to document that all team members involved in the AI lifecycle have received appropriate training on data protection issues.

As AI technologies and legal frameworks evolve – the UK Data (Use and Access) Act 2025, for example, received Royal Assent on June 19, 2025, and is being brought into force in stages – ongoing training is critical. Regular upskilling keeps your team aligned with the latest compliance standards and regulations.

Conclusion

Ensuring GDPR compliance in AI-powered CRM tools isn’t just about avoiding fines – it’s about building trust with your customers. With 59% of consumers expressing doubts about AI’s security, businesses that prioritize transparency and data protection can gain a real edge over competitors. The strategies outlined here – like regular data audits, strong human oversight, and detailed documentation – turn compliance into a key driver of customer confidence.

Consider this: mishandling data costs organizations an average of $14.8 million, and 64% of workers would use generative AI more often if they trusted its safety and security. As Celestine Bahr, Director Legal, Compliance & Data Privacy at Usercentrics, aptly states:

"Embedding privacy into the way you manage relationships with customers strengthens confidence in your brand, demonstrates accountability, and builds a reputation for reliability in a world where trust is increasingly valuable".

The takeaway? Strong compliance practices don’t just protect you – they enhance every aspect of your AI-driven CRM operations. With the EU AI Act rolling out through August 2027 and new regulations on the horizon, staying ahead means evolving alongside the technology. By focusing on principles like privacy by design, data minimization, and human oversight, you can create AI systems that not only meet today’s standards but are ready for tomorrow’s challenges. Incorporating measures like role-based access controls, automated data retention policies, and ongoing GDPR and AI ethics training ensures your system remains secure and earns the trust of your customers for the long haul.

FAQs

How do AI-powered CRM tools comply with GDPR requirements for data minimization and storage limits?

AI-powered CRM tools can support GDPR’s data minimization and storage limitation requirements when they are configured to collect only the information needed for specific tasks and to delete data under clear retention policies once it is no longer required. Some also incorporate privacy-enhancing technologies like differential privacy and federated learning to safeguard sensitive data.

To prevent excessive data storage, AI-driven CRM systems often automate data audits and purges, ensuring unnecessary records don’t pile up. These measures allow businesses to leverage AI tools while protecting user privacy and staying compliant with GDPR regulations.

How can businesses effectively manage user consent in AI-powered CRM systems to comply with GDPR?

To comply with GDPR, businesses need to think of user consent as an ongoing responsibility, not a one-and-done task. Start by designing consent requests that are clear, straightforward, and completely separate from terms and conditions. Make sure users provide a positive opt-in – so no pre-checked boxes – and clearly outline the purpose of collecting their data, along with any third parties who may be involved. Also, make it just as simple for users to withdraw their consent as it is to give it, with clear instructions on how to do so.

Equally important is keeping a detailed record of all consent interactions. This means documenting who gave their consent, the exact wording they agreed to, the date and time (e.g., April 15, 2025), and how the consent was obtained (like through a web form or email). Store this information securely in a system that’s easy to search, so you’re prepared to handle audits or withdrawal requests quickly. Make it a habit to regularly review your consent procedures, and if you ever change the purpose of data collection, inform users and get their consent again.

On the technical side, configure your CRM so that any data marked as requiring consent is processed only when valid consent exists. If a user withdraws consent, stop all processing that relies on it and delete the data unless another lawful basis applies; keeping a minimal suppression record prevents them from being contacted again. This keeps your processes GDPR-compliant and strengthens user trust.

How do AI-powered CRM tools comply with GDPR when handling data access and deletion requests?

AI-powered CRM systems simplify the process of adhering to GDPR regulations, particularly when it comes to managing data subject rights like access and deletion. These platforms make it easy for individuals to request their personal data or ask for its removal. Once such a request is made, the system gathers all relevant personal information, including data processed by AI models, and either delivers it in a portable format or ensures it is securely deleted or anonymized.

Additionally, these tools help businesses track each request against the GDPR one-month deadline and maintain a detailed audit log for transparency and accountability. By automating these tasks, AI-driven CRMs enable companies to handle compliance requirements more efficiently and securely.